Please find my new website including an up-to-date list of my publications at michael-schwarz.github.io!

This website will remain online for historic purposes, but will not receive updates.

I had the joy of presenting our paper on Improving Thread-Modular Abstract Interpretation at the 28th Static Analysis Symposium earlier this year. In it, we contrast two different styles of non-relational thread-modular value analysis, formulate them within a common framework, and provide refinements of either style of analysis.

It feels great to put our ideas, which we have been working on for almost a year, out there in the community!

The full paper can be found here; our (extended) preprint is on arXiv.

So, this one is not about Computer Science, but hear me out! I’ve been a part of the team behind TEDxTUM for almost a year now, so I guess a post about something TEDxTUM related is almost overdue.

Luckily, we just posted two writeups about TEDxTUM's journey towards sustainability: In an attempt to make our efforts in this area more visible, I collaborated with Christoph Berger, Dora Dzvonyar, and Annika Schott to author two Medium articles. And, just like that, I can just ramble here for a bit, add two links, and I have something to post here! 😉

This piece focuses on how we dissected the burritos we had for lunch at the event to learn about the climate impact something as small as one lunch can have. It’s actually surprisingly little, or much, depending on how you look at it!

The second writeup is more or less a list of all the small ways in which we had sustainability on our minds while putting together the event this year. The idea is to be helpful to other organizers also looking at how they can make their events more sustainable and ideally spark a conversation around this topic.

As mentioned above, all of this is joint work with my awesome teammates, who deserve at least as much credit as I do. However, I personally take all the credit for questionable attempts at humor (and the typos)!

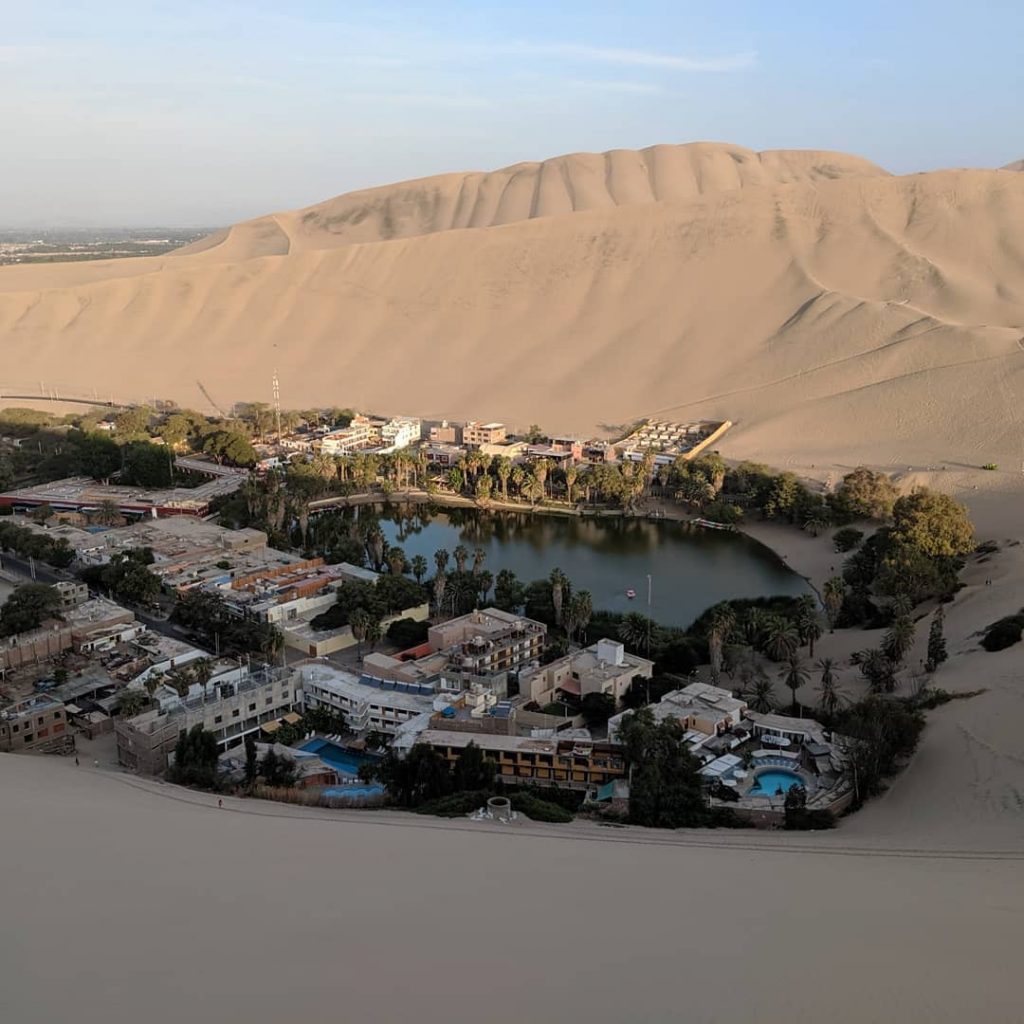

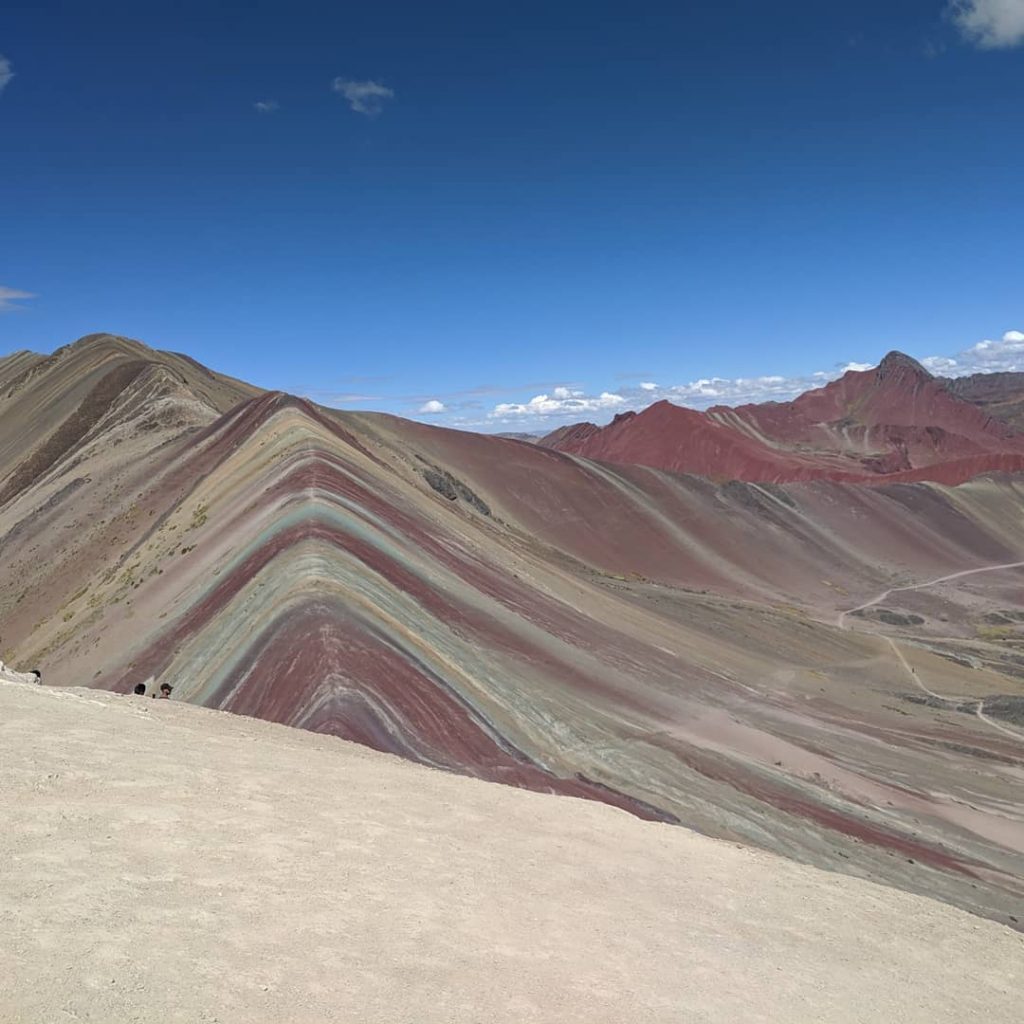

Between finishing my Master’s and starting my job, I had the opportunity to travel to South America for the first time in my life. I spent my five weeks almost entirely in Peru, save for a short detour down into Bolivia. While all my friends will testify that I have more stories from these weeks than anyone can bear to listen to, you are in luck because all I’ll post here are some of the most beautiful pictures I took 😉

Earlier this month I had the honor of giving a couple hundred prospective students some insights into what it is like to study at TUM. Before my short talk, some of the student initiatives presented exciting projects they are working on (Hyperloop or rockets, anyone?). Since I am almost done with my studies, I hope I had enough interesting stories to tell 😉 . While I have given talks for school groups quite regularly, this one was special: It is not that often that a mere Master’s student gets to speak in the biggest lecture hall on campus – and to be honest it is a bit frightening and really exciting at the same time.

The talk was part of visiTUM, an initiative started by TUM Junge Akademie that aims to give prospective undergrads an insight into student life at TUM by hearing from an actual student. It is a really rewarding way to be more involved at TUM and help out the next generation of TUM students. The folks in charge of visiTUM run a workshop every semester, so be sure to check them out if you are a student at TUM looking for an opportunity to volunteer.

In case they are helpful to anyone, here are the slides I used (almost exclusively pictures, so no puns this time I’m afraid 😛 ).

After really loving the experience last time, some friends from TUM and I decided to participate in this year’s edition of Hackaburg (a student-organized hackathon in Regensburg) as well. This time, we were a total of four friends back from undergrad: Ute, Christoph, Robert and me. Ute won last year’s Hackaburg with kooci (a gesture-based voice assistant to help even people as untalented as me cook) and Christoph and I won the BMW Innovation Award with our team aMUSEmeasure working with brain waves. For Robert, it actually was his first hackathon – so we had the perfect mixture of old tired veterans and fresh wide-eyed minds 😉

This year, we once more came without a concrete idea in mind, so while eating dinner we started brainstorming. We decided pretty early on that it would be fun to do something with hardware once more. After going through MLH’s list of hardware, we were intrigued by the myo armband, which is able to recognize gestures. We borrowed two of those and started thinking about what we could do with them, settling on two different ideas: either using them to compose music, or porting everyone’s childhood favorite Rock–Paper–Scissors so you can play it remotely.

Our final product: Employing two myo armbands, we recognize gestures of both hands and link them to music samples. You can compose your own tunes step-by-step: simply select the samples that you feel belong there. In order to make things even more interesting, you can also dynamically control the volume and adjust the speed of your creation by moving your arms up and down.

Without definitely deciding what to do in advance, we set forth and started implementing the parts that would be similar for both ideas. We settled on creating an application for MacOS, mostly because we had two Macs and none of us had ever done anything with MacOS before. Looking back, this might have been the type of decision that ends up costing you a whole lot of time and really putting your patience to the test.

The API for the armband forced us to use C++, while we needed to use Objective-C to have access to the APIs which MacOS provides for playing sounds. In principle this sounds simple, as Xcode offers support for this scenario. However, when we tried it, we were presented with cryptic error messages that told us we could not assign an RHS to a variable that had exactly the same type – supposedly there was no way to cast between the different types. After a lot of trial and error, we found an article that explained how to correctly wrap our Objective-C code in C++ (using, among other things, the Pointer to Implementation idiom) and we finally got it to run.
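For readers who have not come across it: the Pointer to Implementation idiom keeps every implementation detail out of the header, so plain C++ code never sees the types that live on the other side of the language boundary. The following is only a minimal sketch of that idea, not the code from the project. `SamplePlayer` and all of its members are made-up names, and the `Impl` struct holds a plain vector where a real Objective-C++ (`.mm`) file would presumably hold the platform's audio objects.

```cpp
#include <cstddef>
#include <memory>
#include <string>
#include <vector>

// --- What would go into the header: plain C++ only ---
// (SamplePlayer is a hypothetical name used for illustration.)
class SamplePlayer {
public:
    SamplePlayer();
    ~SamplePlayer();  // defined below, where Impl is a complete type
    SamplePlayer(const SamplePlayer&) = delete;
    SamplePlayer& operator=(const SamplePlayer&) = delete;

    void play(const std::string& sampleName);
    std::size_t playedCount() const;
    std::string lastPlayed() const;  // empty string if nothing was played

private:
    struct Impl;                  // forward declaration, no details leak out
    std::unique_ptr<Impl> impl_;  // the "pointer to implementation"
};

// --- What would go into the implementation file ---
// Assumption: in a real project this part would be compiled as
// Objective-C++ and Impl would own the platform-specific objects.
// Here it only records sample names so the sketch stays portable.
struct SamplePlayer::Impl {
    std::vector<std::string> played;
};

SamplePlayer::SamplePlayer() : impl_(std::make_unique<Impl>()) {}
SamplePlayer::~SamplePlayer() = default;

void SamplePlayer::play(const std::string& sampleName) {
    impl_->played.push_back(sampleName);
}

std::size_t SamplePlayer::playedCount() const {
    return impl_->played.size();
}

std::string SamplePlayer::lastPlayed() const {
    return impl_->played.empty() ? std::string() : impl_->played.back();
}
```

Code that talks to the armband would only include the header part and call `play("kick")`; it never needs to know which language the implementation file is written in.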

As we were rapidly approaching the last 16 hours of the contest, we had a working command line application running that also managed to make some good noises. Nevertheless, we felt like our project would really benefit from a GUI to show the users what the software is doing at any given moment. This was where our decision to target MacOS came back to bite us (not just once but twice).

Our first issue was that once we ported our code over into a GUI project and launched it in a separate thread, it was no longer able to connect to the armbands. What we did not know at that time was that sandboxing caused this problem. It apparently applies to applications using Cocoa but not to command line tools. So after failing to make progress for a while, we decided to go for two separate executables as a workaround: one to process gestures, generate sounds and produce text, and another to parse that text and display it in a nicer way. While building this, I tried mocking the application that handles gestures and sounds by simply getting continuous input from `top`. The error message we were presented with was about not having sufficient privileges, which is what finally pointed us to sandboxing. So after wasting barely 6 hours, we were able to get back to our initial setup with just one executable.
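To give an idea of what the display side of such a two-executable workaround involves, here is a small sketch of parsing one line of text into an event. Everything about it is an assumption made for illustration: the line format `<arm> <gesture> <volume>`, the `GestureEvent` struct and the `parseEventLine` function are hypothetical and not taken from the project.

```cpp
#include <sstream>
#include <string>

// Hypothetical event that the gesture/sound process would print as one line.
struct GestureEvent {
    std::string arm;      // e.g. "left" or "right"
    std::string gesture;  // e.g. "fist"
    double volume = 0.0;  // assumed range: 0.0 to 1.0
};

// Parses a line of the assumed form "<arm> <gesture> <volume>".
// Returns false for malformed lines and leaves `out` untouched,
// so the display process can simply skip anything it does not understand.
bool parseEventLine(const std::string& line, GestureEvent& out) {
    std::istringstream in(line);
    GestureEvent event;
    if (!(in >> event.arm >> event.gesture >> event.volume)) {
        return false;  // too few fields, or volume is not a number
    }
    std::string extra;
    if (in >> extra) {
        return false;  // unexpected trailing field
    }
    if (event.volume < 0.0 || event.volume > 1.0) {
        return false;  // outside the assumed volume range
    }
    out = event;
    return true;
}
```

The display process would read its standard input line by line with `std::getline` and hand each line to `parseEventLine`, ignoring the ones that fail.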

Now for the second problem: None of the people still awake at this point had any idea how the drag-and-drop-based tools for creating interfaces in Xcode worked (they are not as straightforward as it may sound). So everyone slowly lost faith and went to bed, accepting the fact that there would be no GUI. Everyone except for Robert, that is, who apparently had a lot of midnight (or 3am 😉 ) oil to burn. When I came back the following morning, he had pretty much solved all the issues we had encountered and presented us with a functioning GUI that just needed to be connected to the rest of the application, which we ended up doing in less than an hour.

After achieving this, we switched our samples one last time to make them harmonize better. Then, we demoed our application to two judges. (That is one of the things they had changed compared to last year: there were two rounds of judging, the first being more technical and with more time, and the second involving pitching in front of everyone on the big stage.) While we were pretty happy with our results, the judges seemed considerably less so and did not even want to try the experience themselves. So we did not have too high hopes that we would make it to the next stage, which made us all the more excited when we found out that we had in fact done it. And thanks to Ute’s kickass pitch and Christoph’s musical talents while demoing the experience (and of course also thanks to Robert and me doing what we do best – looking competent) we managed to convince the big jury once more and ended up taking home not just a lack of sleep and a lot of good memories but also some medals 🙂

In case you want to find out more, check out our devpost submission or head straight to GitHub for the code. If you speak German, be sure to give this article in the local paper a read too.

Every spring and fall, students from leading technical universities across Europe head to another institution in the ATHENS network to take part in a one-week intensive course on a topic of their choice. This March was the second time I had the opportunity to take part in this program. Two years ago I went to Télécom ParisTech to explore how information can be extracted from unstructured data sources such as Wikipedia. I published a short writeup of that here.

This time around, I went to the Technical University of Delft to look into how Data Science can be used to improve Software Engineering. The course was taught by Alberto Bacchelli, who is SNSF Professor of Empirical Software Engineering at the University of Zurich and came back to TU Delft to teach two courses during ATHENS week. He is one of the leading figures in the field of Data Science in Software Engineering (also called Empirical Software Engineering) and has a ton of relevant publications.


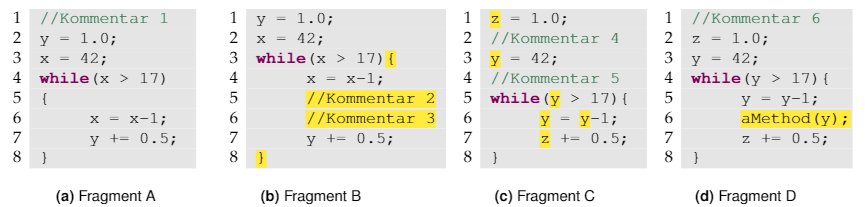

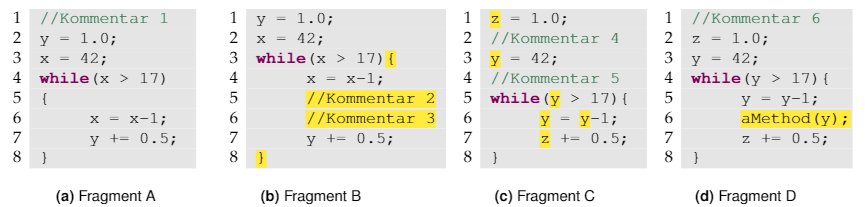

A couple of weeks ago, I published a first article on code clones based on a paper I wrote a couple of terms back in my undergrad. If you haven’t read that one yet, give it a read first. In this part I focus on the different algorithmic approaches that can be used to detect code clones. And once more: All the stuff below is based on the German paper I wrote for a class on Software Quality, which is in turn based on the publications referenced in the paper and at the bottom of this page. Any gross oversimplifications and inaccuracies are entirely my fault.

So, what we have from the last post is an understanding of what code clones are, what different types of clones there are, and an understanding that we should try to avoid them if possible. Now, let’s take a look at how to detect such code clones in a code base (short of hiring someone to read code and just flag clones manually).

To do this we will consider four different approaches in increasing order of abstraction.

I’ve been keeping very busy since I moved to Norway for my internship, but today was one of those rainy October Sundays, so I had the time to sit down and finally finish this post, which I have been meaning to write for a little over a year but never got around to. Well, here it goes: All the stuff below is based on the German paper I wrote for this class, which is in turn based on the publications referenced in the paper and at the bottom of this page. Any gross oversimplifications and inaccuracies are entirely my fault.

Way back in the 6th semester of my Bachelor’s at TUM I had the opportunity to take a seminar class focusing on software quality. The general idea was to get a better understanding of what good code quality is, to go beyond the notion of “a good developer knows good code when they see it”, and to investigate whether there are metrics that might be interesting. My topic was code clones and I was working under the supervision of a researcher at TUM who has published on exactly this topic.

First up, let’s define what we mean by Code Clone. One definition seems immediately obvious:

“Code Clones are segments of code that are similar according to some definition of similarity.” – Ira Baxter

However, that doesn’t really help us if we want a computer to analyze code to find clones. So let’s take a look at a definition used in literature that we can throw at a computer:

I guess it is example time!


Just a few weeks after coming back from my time abroad, I found myself travelling again for the weekend: In Regensburg (about 1h from Munich), Hackaburg was taking place for the second time. It is a student-run hackathon at OTH Regensburg. I had a blast there the last time, so I naturally decided to go this year as well.

Unlike at most of the previous hackathons I attended, we had already formed a team prior to the event – all of us Master’s students at TUM. Combined, our team has a little under 20 years of study time at TUM under its belt (which makes me feel kind of wise but also really old 😉 ).

The event kicked off with keynotes by sponsors and pitches by a couple of other participants looking for team members. Soon after, our team was sitting in a bar in downtown Regensburg, trying to think of what we wanted to build. While we had a bunch of decent ideas, none of them quite felt right. So, we decided to start over with a fresh mind the following morning.
